An autonomous robot ping-pong player dubbed Ace has achieved a milestone for AI and robotics in Tokyo by competing against and sometimes defeating top-level human players at table tennis, a feat that could presage an array of other applications for similarly adept robots.

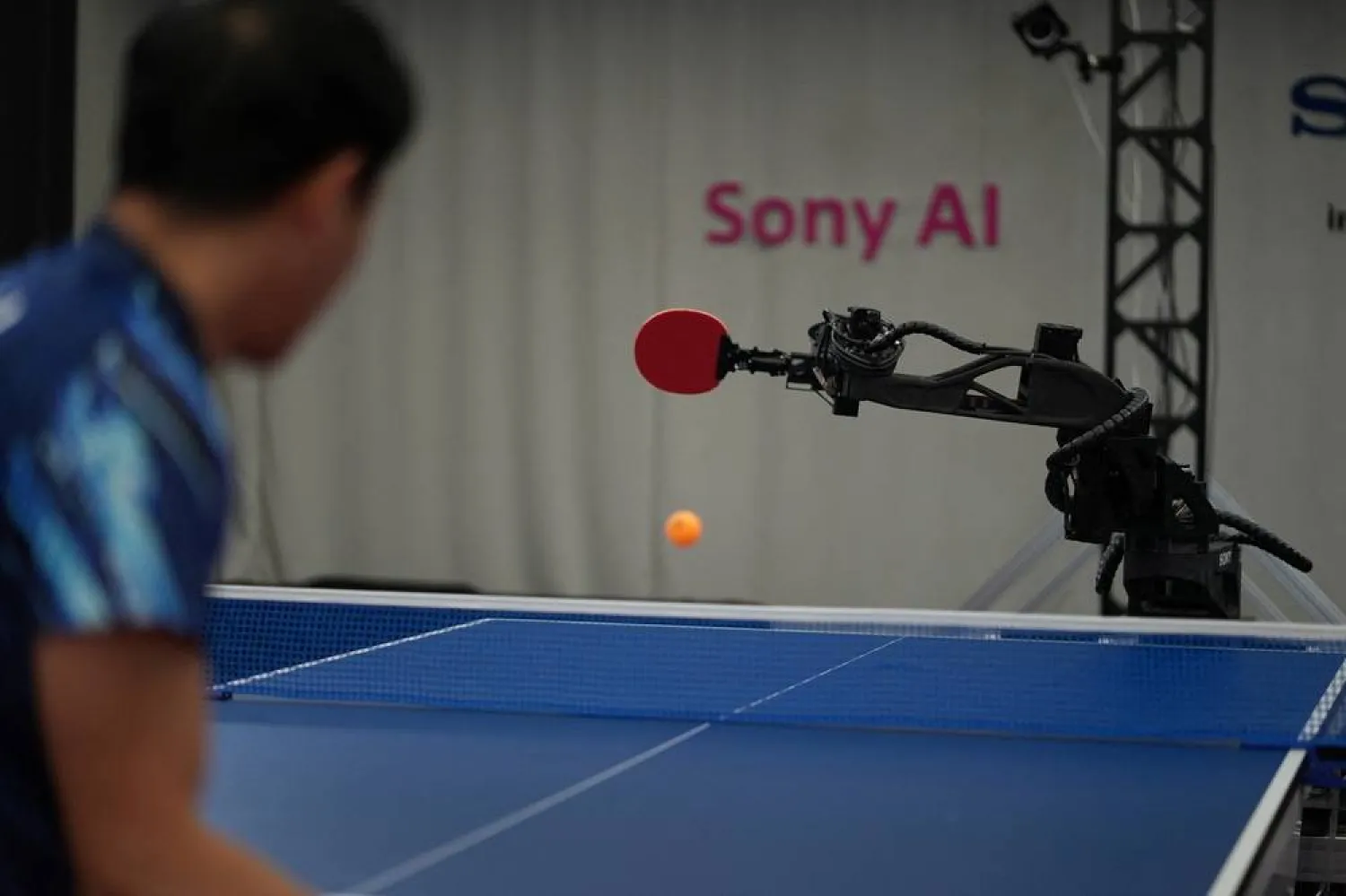

Ace, created by Sony AI, the artificial intelligence research division of Japan's Sony, is the first robot to attain expert-level performance in a competitive physical sport, one that requires rapid decisions and precise execution, the project's leader said. Ace did so by combining high-speed perception, AI-based control and a state-of-the-art robotic system.

Various ping-pong-playing robots have been built since 1983, but until now none could rival highly skilled human competitors. Ace changed that with its performances against elite-level and professional human players in matches that followed the rules of the International Table Tennis Federation, the sport's governing body, and were officiated by licensed umpires.

"Unlike computer games, where prior AI systems surpass human experts, physical and real-time sports such as table tennis remain a major open challenge due to their requirements for fast, precise and adversarial interactions near obstacles and at the edge of human reaction time," said Peter Dürr, director of Sony AI Zurich and leader for Sony AI's project Ace.

The project's goal was not only to compete at table tennis but to develop insights into how robots can perceive, plan and act with human-like speed and precision in dynamic environments, Dürr said.

"The success of Ace, with its perception system and learning-based control algorithm, suggests that similar techniques could be applied to other areas requiring fast, real-time control and human interaction - such as manufacturing and service robotics, as well as applications across sports, entertainment and safety-critical physical domains," said Dürr, lead author of a study describing Ace's achievements published on Wednesday in the journal Nature.

In matches detailed in the study, held in April 2025, Ace won three of five matches against elite players and lost both of its matches against professional players, the sport's top skill level. Sony AI said Ace has since beaten professional players, in December 2025 and again last month.

Companies worldwide are making rapid advances in robotics. On Sunday, for instance, robots outran human runners in a half-marathon race in Beijing.

'A BLUR TO THE HUMAN EYE'

AI systems have already excelled in digital domains, including strategy games such as chess and Go as well as complex video games.

While video games take place in simulated environments, table tennis requires rapid decision-making, precise physical execution and continuous adaptation to an unpredictable opponent, Dürr said. The ball moves at high speed with complex spins and trajectories, pushing humans and robots alike to operate at the limits of sensing, prediction and motor control, he added.

Ace's architecture integrates nine synchronized cameras and three vision systems to track a spinning ball with exceptional accuracy and very short processing times.

"This is fast enough to capture motion that would be a blur to the human eye," Dürr said.

The researchers built a custom robot platform with eight joints, which Dürr said is the minimum needed to execute competitive shots: three for the racket's position, two for its orientation and three for the shot's speed and strength.

Mayuka Taira, a professional table tennis player who lost a match to Ace last December, said in comments provided by Sony AI that the robot's strengths "are that it is very hard to predict, and it shows no emotion."

"Because you can't read its reactions, it's impossible to sense what kind of shots it dislikes or struggles with, and that makes it even more difficult to play against," Taira said.

Rui Takenaka, an elite-level player who has both won and lost matches against Ace, said in comments provided by Sony AI: "When it came to my serve, if I used a serve with complex spin, Ace also returned the ball with complex spin, which made it difficult for me. But when I used a simple serve - what we call a knuckle serve - Ace returned a simpler ball. That made it easier for me to attack on the third shot, and I think that was the key reason why I was able to win."

Ace still has room for improvement, Dürr said.

"Ace has a superhuman ability to read the spin of incoming balls, and superhuman reaction time. As it learns to play not from watching humans play, but is trained by itself in simulation, it also reacts differently from human players and creates surprising situations," Dürr said. "At the same time, professional human athletes are very good at adapting to their opponent and finding weaknesses, which is an area that we are working on."