Tech giant Meta urged Australia on Monday to rethink its world-first social media ban for under-16s, while reporting that it has blocked more than 544,000 accounts under the new law.

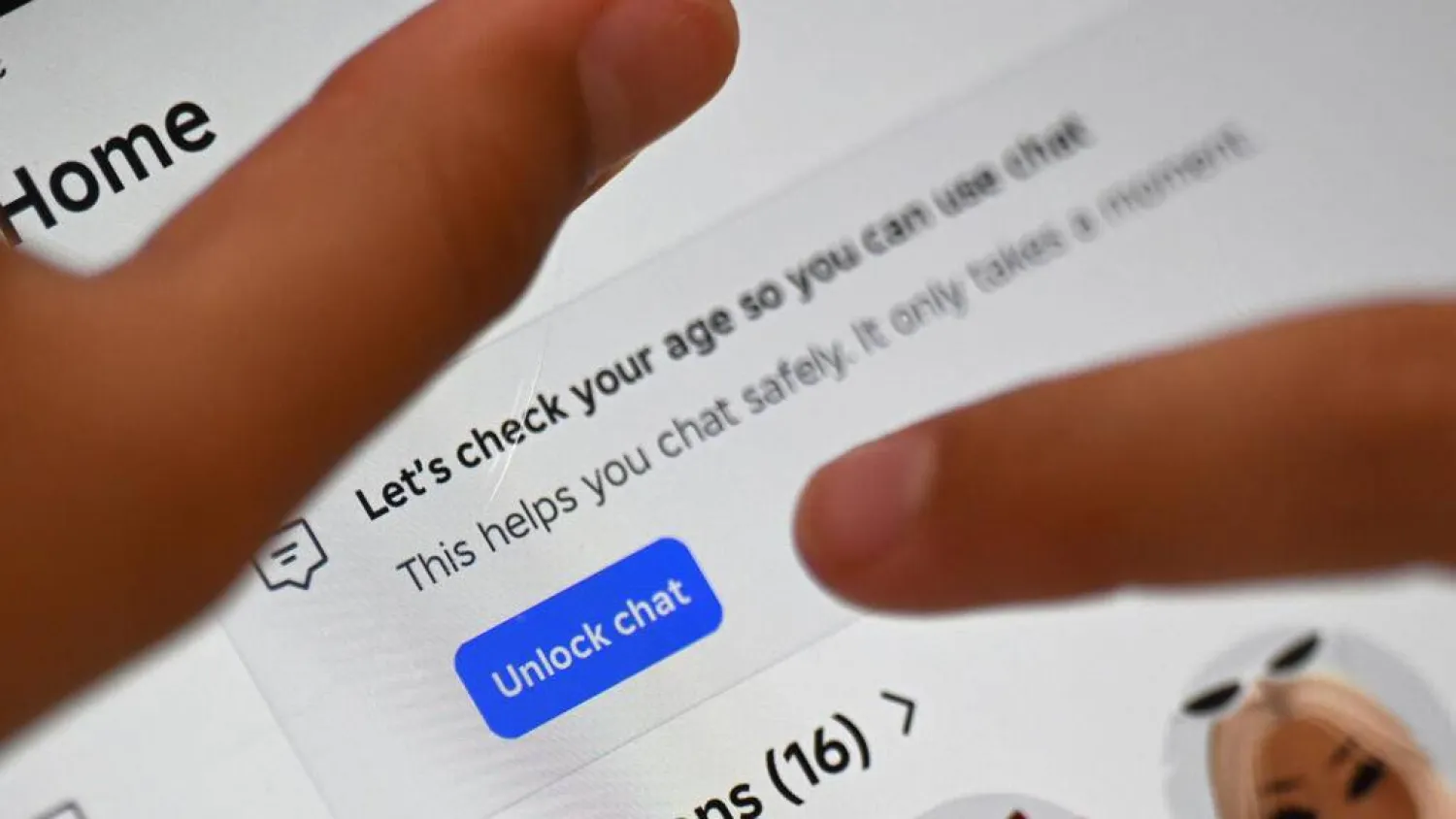

Australia has required big platforms including Meta, TikTok and YouTube to stop underage users from holding accounts since the legislation came into force on December 10 last year.

Companies face fines of up to Aus$49.5 million (US$33 million) if they fail to take "reasonable steps" to comply.

Billionaire Mark Zuckerberg's Meta said it had removed 331,000 underage accounts from Instagram, 173,000 from Facebook, and 40,000 from Threads in the week to December 11.

The company said it was committed to complying with the law.

"That said, we call on the Australian government to engage with industry constructively to find a better way forward, such as incentivizing all of industry to raise the standard in providing safe, privacy-preserving, age appropriate experiences online, instead of blanket bans," it said in a statement.

Meta renewed an earlier call for app stores to be required to verify people's ages and get parental approval before under-16s can download an app.

This was the only way to avoid a "whack-a-mole" race to stop teens migrating to new apps to avoid the ban, the company said.

The government said it was holding social media companies to account for the harm they cause young Australians.

"Platforms like Meta collect a huge amount of data on their users for commercial purposes. They can and must use that information to comply with Australian law and ensure people under 16 are not on their platforms," a government spokesperson said.

Meta said parents and experts were worried about the ban isolating young people from online communities, and driving some to less regulated apps and darker corners of the internet.

Initial impacts of the legislation "suggest it is not meeting its objectives of increasing the safety and well-being of young Australians", it said.

While raising concern over the lack of an industry standard for determining age online, Meta said its compliance with the Australian law would be a "multilayered process".

The California-based firm said that since the ban it had helped found the OpenAge Initiative, a non-profit group that has launched age-verification tools called AgeKeys for use with participating platforms.