Samsung Electronics released its Galaxy XR extended reality headset on Tuesday, counting on AI features from Google to propel it into the nascent and uncertain market of computing-on-your-face that is dominated by Meta and Apple.

The headset, resembling those made by others such as Meta, will cost $1,799, or about half of what Apple charges for its Vision Pro headset.

It is the first of a family of new devices, powered by the Android XR operating system and artificial intelligence, in a long-term partnership with Alphabet's Google and Qualcomm.

"There's a whole journey ahead of us in terms of other devices and form factors," said Google's vice president of AR/XR Sharham Izadi in an interview ahead of the launch.

Up next will be the release of lighter eyeglasses, executives said, declining to elaborate. Samsung has announced partnerships with Warby Parker and South Korea's Gentle Monster luxury eyewear.

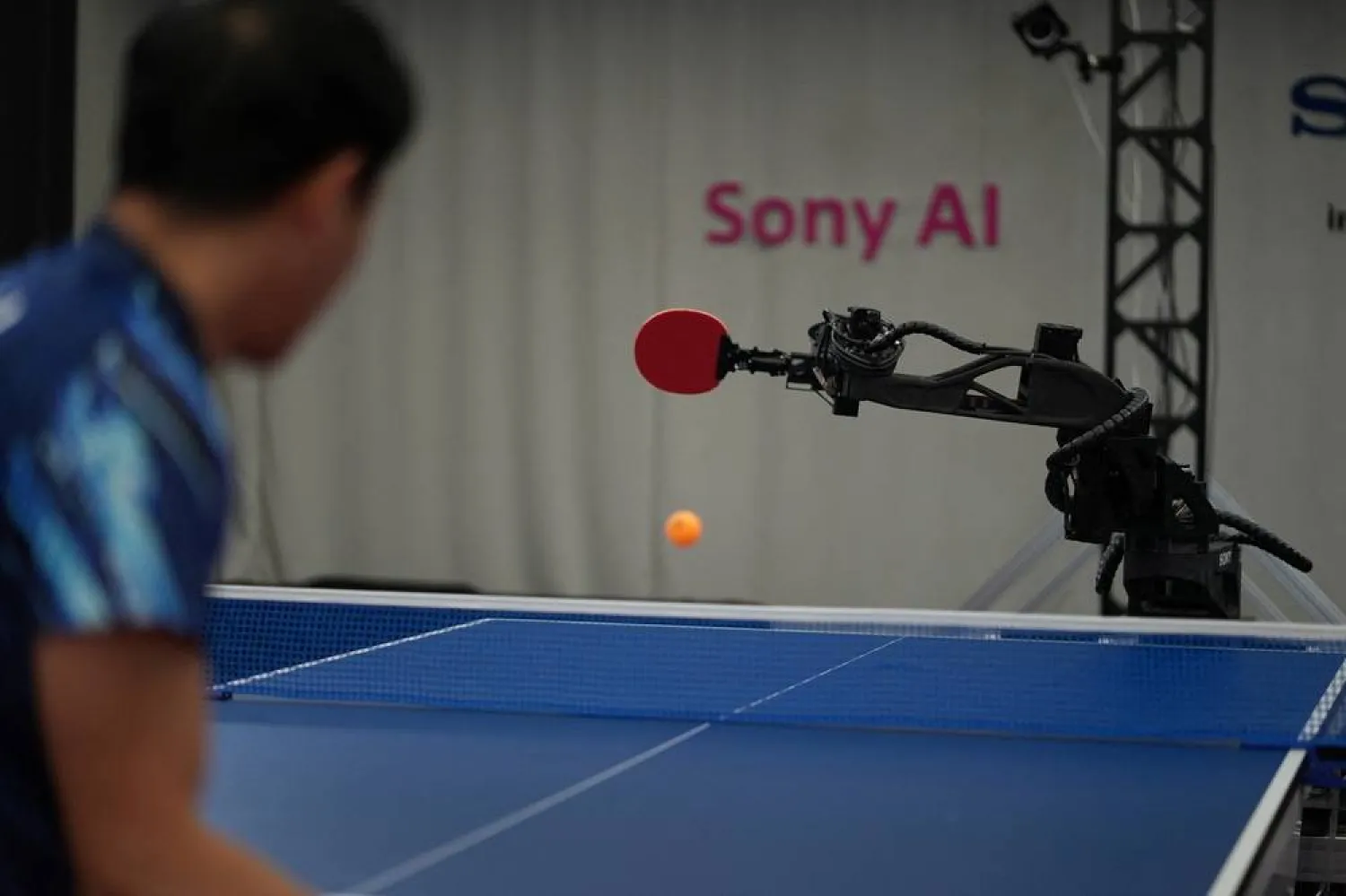

The race to find new form factors for entertainment and computing, underpinned by AI, has fueled a battle among the biggest technology companies. Instagram-owner Meta overwhelmingly dominates the VR headset industry with about an 80% market share, with Apple trailing behind.

ChatGPT-maker OpenAI is also diving into the market and spent $6.5 billion in May to buy iPhone designer Jony Ive's hardware startup io Products to develop devices for the AI age.

Samsung has studied the extended reality segment for the past 10 years, and it was not until about four years ago that the company approached Google to jointly develop the project, codenamed "Moohan," meaning "infinite" in Korean, said Jay Kim, executive vice president at Samsung's mobile division.

"We have been agonizing over when to bring the product to the market, and considering various factors such as technology evolution and market situation, we believe that now is the best timing," he said at a briefing in Seoul on Wednesday.

USING GOOGLE AI STRENGTH

The long-awaited Samsung Galaxy XR, first demonstrated last year, combines virtual reality and mixed reality features. The goggles immerse users watching videos, such as on Alphabet's YouTube, or playing games and viewing pictures, while also allowing users to interact with their surroundings.

The latter feature takes advantage of Google's Gemini service, which can analyze what users are seeing and offer directions or information about real-world objects when users look at them and circle them with their fingers.

In an interview last week, executives from Google and Samsung said they believe extended reality headsets, which have yet to ignite mass consumer interest, would benefit greatly from having Google's powerful multimodal AI features applied throughout the device. Those features can process information from different types of data such as text, photos and videos.

It's a set of software capabilities that Apple has yet to demonstrate, despite rolling out an updated Vision Pro with a more powerful chip.

"Google entering the fray again changes the dynamic in the ecosystem," said Anshel Sag, principal analyst at Moor Insights & Strategy, noting that Google's software added $1,000 in value to the device by some estimates. "Google really wants people to get the full experience of Gemini when using this headset."

Customers who buy the device this year will receive a bundle of free services including 12 months of access to Google AI Pro, YouTube Premium, Google Play Pass and other specialized XR content, the companies said.

The prototype for AI-enhanced goggles was ready by the time Apple launched its Vision Pro headset in 2024, executives said, as they sought to enhance existing applications like YouTube, Google Photos and Google Maps, while creating new immersive experiences.

Like many first-generation technologies, it attempts to do multiple things that could have consumer and enterprise applications.

Qualcomm is providing its Snapdragon XR2+ Gen 2 chip to power the headset.

DIFFICULT MARKET

Many tech CEOs have been seduced by what they say is the next big thing in personal computing, but the market remains tiny by tech standards.

Research firm Gartner expects the global head-mounted display market to grow 2.6% from this year to $7.27 billion next year. Lighter, eyeglass-type AI devices such as Meta's Ray-Ban smart glasses, made in collaboration with EssilorLuxottica, are expected to drive most of this growth.

Despite the expanding competitive landscape, the global virtual reality market, which includes so-called "mixed reality" headsets launched more recently, has faced three consecutive years of decline. Shipments are expected to weaken again in 2025, falling 20% year on year, according to research firm Counterpoint.

"With a potentially more competitive price point than Apple’s Vision Pro, Samsung’s Project Moohan headset could emerge as a strong contender in the premium VR segment, particularly within the enterprise market," Counterpoint senior analyst Flora Tang.

The Galaxy XR is the first Android XR device. But Samsung has dabbled in face-mounted computing for about a decade, starting with the Gear VR, a headset that users slipped a smartphone into, developed in partnership with VR headset maker Oculus. Meta acquired Oculus in 2014.